In this article:

- Why most persistent memory setups are over-engineered

- Knowledge work needs a different memory shape

- How I built persistent memory for Claude in three layers

- How to choose a memory architecture for Claude

- The same memory mismatch shows up in GTM stacks

- Where to start with Claude persistent memory

How do you give Claude memory that survives across sessions when your work is knowledge work (analysis, decisions, strategy) rather than coding? This post walks through the three-layer setup I run across four B2B SaaS GTM clients, why curated markdown beats vector databases for this kind of work, and how to choose between eight common memory architectures.

Why most persistent memory setups are over-engineered

Out of the box, Claude is amnesiac between sessions. Every conversation starts from zero. If your setup doesn’t carry the important stuff from one session to the next, you’re re-explaining the same context every time, and the output is worse for it. So persistent memory matters.

But persistent memory is a category, not a solution. How you build it matters more than whether you build it — because how you build it has to match the type of work you do, and this is where most people get stuck. Engineers and non-engineers alike.

The default instinct is to reach for the most advanced solution: vector database, embeddings, RAG pipeline. Engineers reach for it because they can. Non-engineers reach for it because we assume the fancy thing must be the right thing — or we don’t realise simpler options exist at all.

For most of the work I do, the fancy version would be the wrong shape. Here is why, what I use instead, and how to tell which shape your own work needs.

A note before going further: I’m a fractional GTM consultant. I run B2B SaaS GTM engines — analysis, decisions, partner management, content, outbound, SEO. I work with four retainer clients at a time. Roughly 98% of my work happens inside Claude Code rather than in tools or UIs. The reason that’s possible at all is a persistent memory layer that lets me pick up where the last session left off, on every client, without re-explaining who they are.

Knowledge work needs a different memory shape

The right shape of memory depends on the shape of the work — and knowledge work isn’t one thing. The same term covers large-corpus retrieval, structured analysis, procedural execution, multi-hop relational reasoning, creative synthesis, and the judgment-heavy decision-making that compounds over months on the same set of facts. Each has a different memory shape that fits.

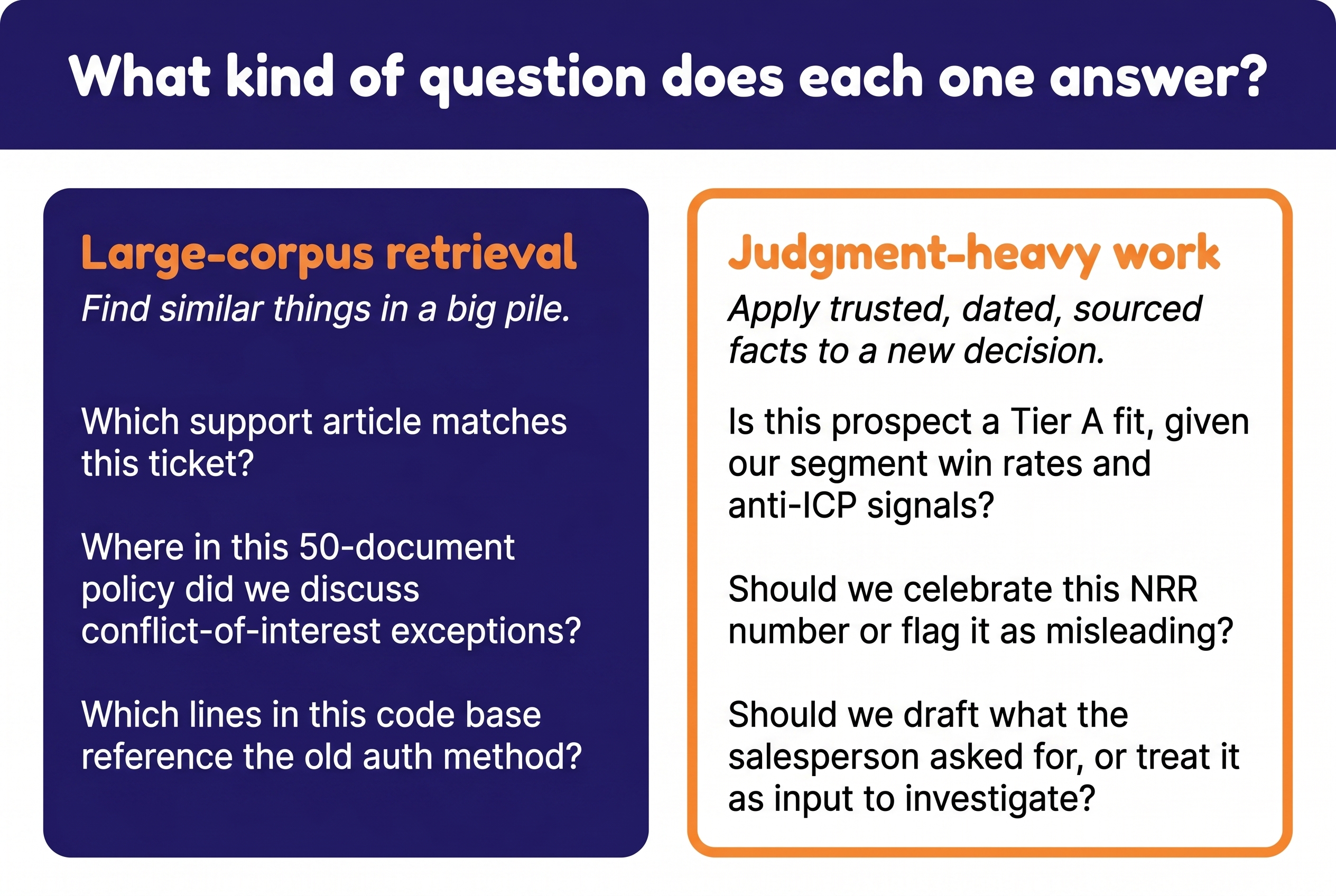

The two patterns worth contrasting first are large-corpus retrieval and judgment-heavy work, because confusing them is what produces the vector-DB-by-default instinct. Side by side, they look very different.

Large-corpus retrieval. You have a lot of documents and you need to find the relevant slice for a specific question. “Which support article matches this ticket?” “Where in this 50-document policy did we discuss conflict-of-interest exceptions?” “Which lines in this codebase reference the old auth method?” The work is “find similar things in a big pile.” Semantic similarity is exactly what you want. This is where vector databases and RAG actually shine.

Judgment-heavy work. You make decisions where new decisions build on previous decisions. The same fact has different weight in different contexts. What you don’t include matters as much as what you do. Source attribution, dating, and trust hierarchy decide whether a fact is a conclusion or just an input to investigate.

Most of what I do is judgment-heavy. A typical question: “Is this prospect a fit, given everything we know about this client’s ICP, the segment win rates from the last three years, the messaging that’s tested, and what’s already been ruled out?” That isn’t “find similar things.” That is “apply trusted, dated, sourced facts to a fresh decision, in the right order.”

A semantic search index over a pile of meeting notes and Slack threads cannot answer this. It will surface the closest-sounding text, regardless of whether the source is the CEO’s measured CRM data or a salesperson’s mid-call gut feel. Two facts can sound similar and have completely opposite weight — and the LLM has no way to tell, because the embedding doesn’t carry that.

You don’t fix this by tuning the retrieval. You fix it by changing the shape of the memory.
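To make the contrast concrete, here is a minimal sketch of memory that carries source and date alongside each fact, so ordering can follow trust instead of textual similarity. The `Fact` type, the source labels, and the rank values are all hypothetical; the only thing taken from the text is the idea that measured CRM data outranks a mid-call gut feel.

```python
from dataclasses import dataclass
from datetime import date

# Hypothetical trust ranks (lower is more trusted). Real ranks are a human call.
TRUST_RANK = {
    "measured_crm_data": 0,
    "client_statement": 1,
    "sales_gut_feel": 2,
}


@dataclass(frozen=True)
class Fact:
    text: str
    source: str  # must be a key of TRUST_RANK
    as_of: date


def order_for_decision(facts: list[Fact]) -> list[Fact]:
    """Most trusted source first; within the same source, newest first."""
    return sorted(
        facts,
        key=lambda f: (TRUST_RANK[f.source], -f.as_of.toordinal()),
    )
```

Two facts with near-identical wording would sit next to each other in an embedding space; here they land at opposite ends of the list because the source and date travel with them.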

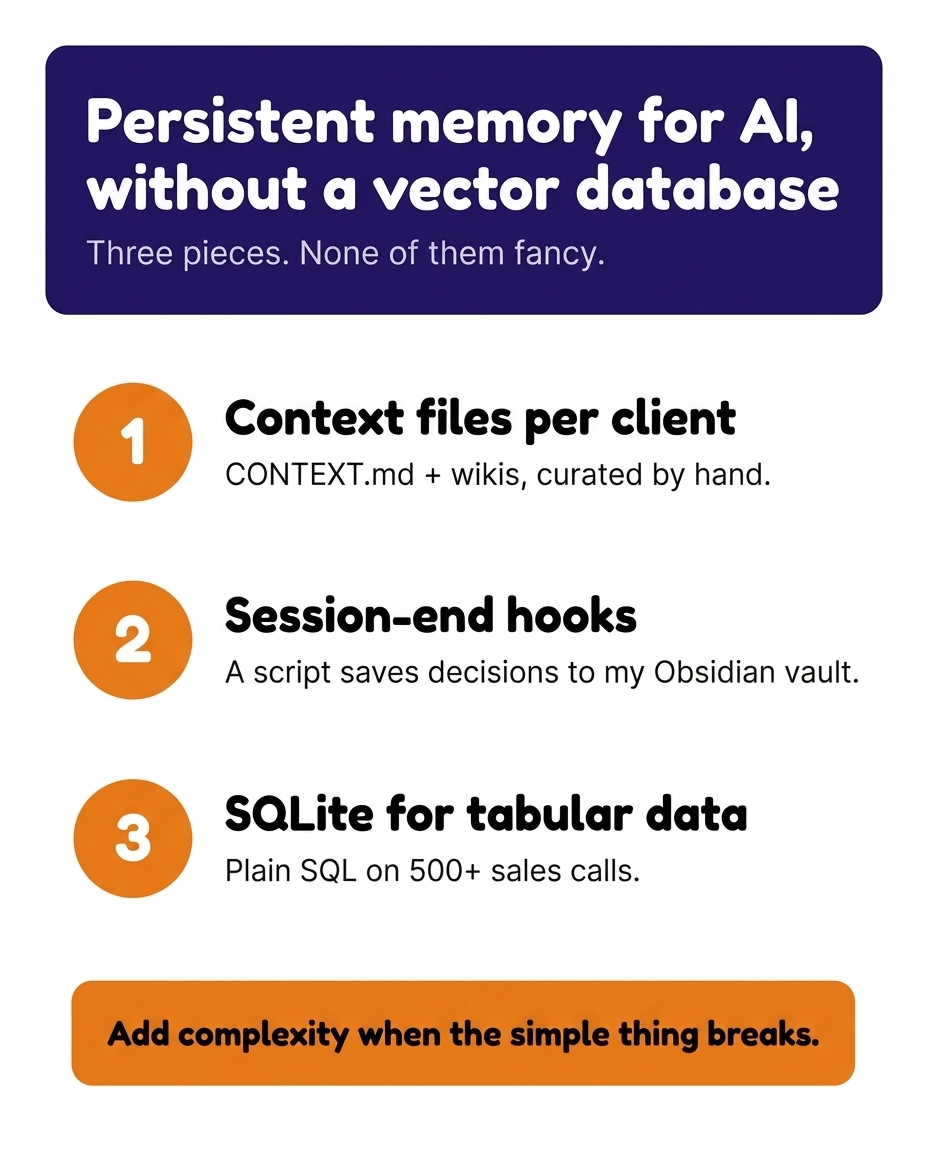

How I built persistent memory for Claude in three layers

This isn’t a finished system. It’s where I’ve ended up after a lot of small steps, and I’ll keep adding parts as the work gets bigger and the simple thing breaks. But what’s there now does the actual job.

1. Curated markdown context files and per-client wikis

Every client has two layers of curated markdown.

The top layer is a small set of context files — CONTEXT.md, business-profile.md, customer-intel.md, project-log.md, and a handful of others depending on the engagement. These are loaded at the start of every session. They carry what I need at hand on every task: who the client is, what the ICP looks like, what got decided recently, what’s open.

The deeper layer is a per-client wiki — history, open questions, prior research, decision logs, full analyses that I don’t need at every session but want one pointer away when the conversation goes there. The wiki is queryable on demand: a question like “what did we conclude about pricing for the manufacturing segment last quarter?” lands the LLM in the right wiki page rather than expanding the always-loaded top layer until it’s noise.

Both layers are hand-written and hand-pruned. Hand-pruning is the entire point. Choosing what goes in — and what stays out — is the actual work. No embedding pipeline can do that for me, because the judgment lives in what I choose to leave out, and that judgment is different at the top layer (what does the LLM need every time?) than at the wiki layer (what do I want to find again later?).
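As a rough illustration of what "loaded at the start of every session" could look like, the sketch below concatenates a client's top-layer files into one preamble. The function name, the comment-style separators, and the idea of one directory per client are assumptions for the example; only the file names come from the text above.

```python
from pathlib import Path

# File names as mentioned above; the exact set varies by engagement.
TOP_LAYER_FILES = (
    "CONTEXT.md",
    "business-profile.md",
    "customer-intel.md",
    "project-log.md",
)


def build_session_preamble(client_dir: Path, filenames=TOP_LAYER_FILES) -> str:
    """Concatenate the always-loaded context files for one client.

    Missing files are skipped rather than treated as errors, because not
    every client has every file.
    """
    sections = []
    for name in filenames:
        path = client_dir / name
        if path.is_file():
            body = path.read_text(encoding="utf-8").strip()
            # Label each section so the model can tell which file a fact came from.
            sections.append(f"<!-- {name} -->\n{body}")
    return "\n\n".join(sections)
```

The wiki is deliberately absent from this loader: it stays on disk and is opened only when a question points there, which keeps the always-loaded layer small.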

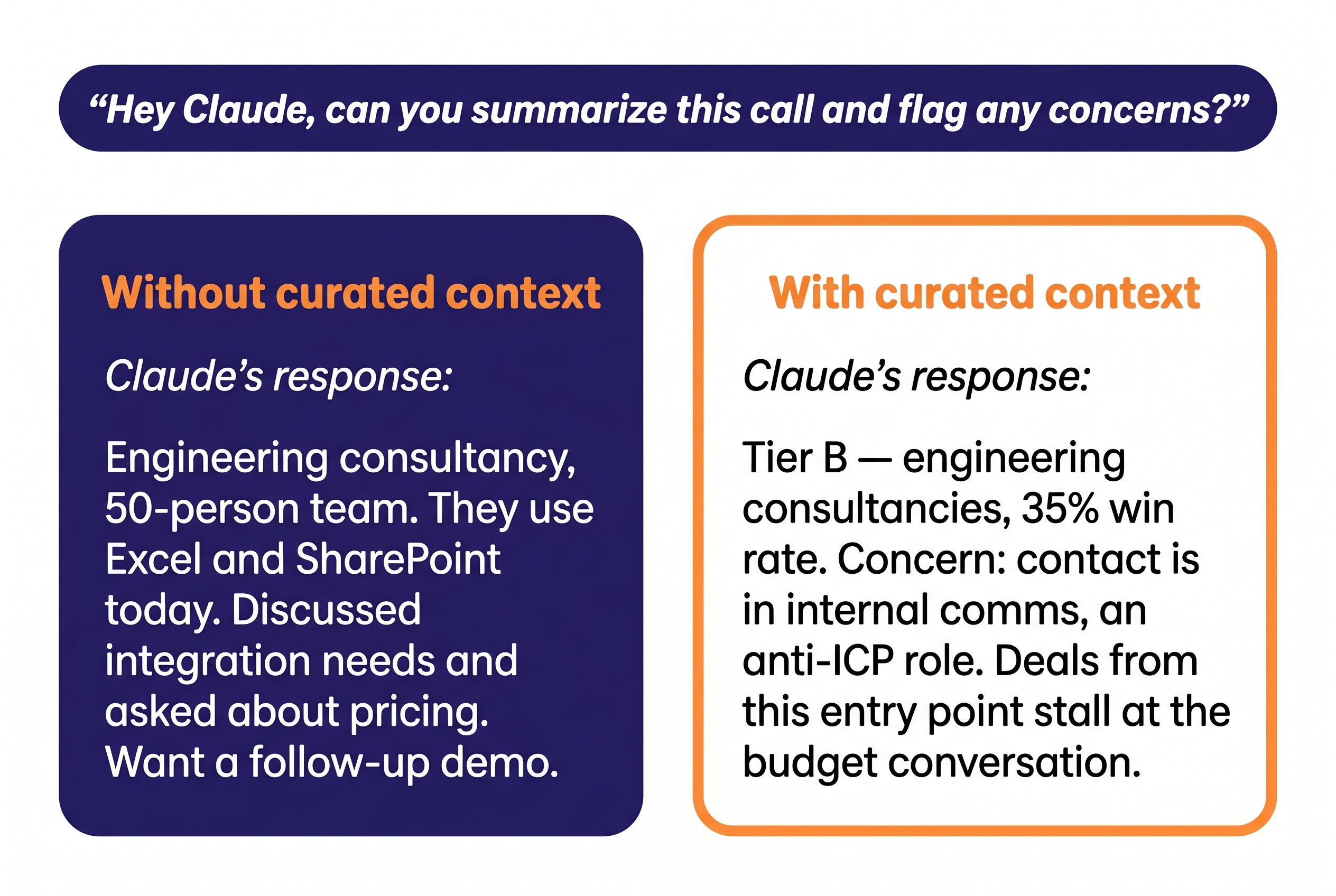

Without this layer. Ask Claude “summarize this call and flag any concerns” with no client context loaded, and you get a surface-level recap — engineering consultancy, 50-person team, uses Excel today, wants a follow-up demo. The “concerns” it flags are generic ones any LLM would surface, not ones drawn from your own patterns across actual deals. Ask “draft a cold email to [segment]” and you get an email that could have been written for any company, in any segment, in any year.

With this layer. Ask the same question and you get: “Tier B — engineering consultancies, 35% win rate. Concern: contact is in internal comms, an anti-ICP role. Deals from this entry point stall at the budget conversation.” Ask “draft a cold email to manufacturing” and the email is built around the messaging pillar that’s actually tested, with the entity-linking framing that’s pulled the strongest reactions in real calls, and not the angle you tried last quarter that converted at 0.3%.

A small example. The CRM has dozens of fields per contact, most of them uninformative or partially wrong. The ICP file in the top layer doesn’t carry the dump — it carries three lines:

“Tier A — Invest. IT consultancies 62% win rate (38 deals, 2024–2025). Anti-ICP signals: single-user buyer, ‘just need timesheets,’ procurement entering early.”

Three lines doing four jobs:

- Every claim sourced and dated.

- The segment named, with a measured win rate.

- The anti-patterns made explicit, so the LLM flags them — not the operator.

- The action implied: “Invest” — this segment is worth pursuing.
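The "sourced and dated" rule is mechanical enough to spot-check. The sketch below flags any line that states a percentage without a sample size and a year. The patterns assume claims are written in the style of the example above ("N deals", a four-digit year); that convention is an assumption of this sketch, not a described part of the setup.

```python
import re

PERCENT = re.compile(r"\d+(?:\.\d+)?%")
SAMPLE = re.compile(r"\b\d+\s+deals\b")  # assumed phrasing for sample size
YEAR = re.compile(r"\b20\d{2}\b")


def unsourced_claims(markdown: str) -> list[str]:
    """Return lines that state a percentage without a sample size and a year."""
    flagged = []
    for line in markdown.splitlines():
        has_number = PERCENT.search(line)
        is_backed = SAMPLE.search(line) and YEAR.search(line)
        if has_number and not is_backed:
            flagged.append(line.strip())
    return flagged
```

A check like this only catches missing metadata. Whether the claim belongs in the file at all is still a curation decision.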

The supporting analysis lives in the wiki, one pointer away: the 60-deal CRM extract that paragraph compresses, the historical win/loss patterns, the segment-by-segment breakdown.

A vector DB could pull dozens of CRM rows that sound relevant and bury both the paragraph and the wiki page in noise. Curation is what makes the LLM useful.

How I keep it current.

- Top layer: a small set of skills propose updates whenever new material lands. A transcript routes its key facts to the right files. A financial update queues for the business profile. A workshop output gets added to the messaging file. I review proposals before applying.

- Wiki layer: a periodic staleness audit compares the last-maintained date against new sources, and triggers proposed additions for review.

- Discipline: curation is the actual work; the tooling just makes it lower-friction. If I let the hand-pruning slip, the layer rots within weeks — facts in stale frames, anti-patterns missing because the last two months of calls weren’t promoted, decisions still listed as “open” months after they closed.
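The staleness audit for the wiki layer can be sketched as a date comparison. The `WikiPage` and `Source` types, and the idea that each source is tagged with the page it feeds, are hypothetical; the text only says that last-maintained dates are compared against new sources.

```python
from dataclasses import dataclass
from datetime import date


@dataclass(frozen=True)
class WikiPage:
    name: str
    last_maintained: date


@dataclass(frozen=True)
class Source:
    name: str
    received: date
    topic: str  # hypothetical: name of the wiki page this source feeds


def stale_pages(pages: list[WikiPage], sources: list[Source]) -> dict[str, list[str]]:
    """Map each stale page to the sources that arrived after its last review."""
    report: dict[str, list[str]] = {}
    for page in pages:
        newer = [
            s.name
            for s in sources
            if s.topic == page.name and s.received > page.last_maintained
        ]
        if newer:
            report[page.name] = sorted(newer)
    return report
```

The output is a review queue, not an update: it says which pages have unread material behind them and leaves the decision about what to promote to a person.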

This is the layer where most of the leverage lives. It’s also the one that gets skipped fastest — hand-pruned markdown looks too plain to take seriously next to anything fancier, and the over-engineering instinct that kicks in the moment someone says “AI memory” reaches for the fancier thing by reflex. The fancier thing usually doesn’t work as well. Start simple.

2. Session-end automation that carries the useful bits forward

The second part is a local hook script that fires at the end of each Claude Code session. It pulls out what got decided, what got opened, what got resolved, and files the right pieces into the right place per client. No copy-paste.

Decisions and open items are exactly the kind of thing that gets lost between sessions if you don’t carry them forward. They’re also the kind of thing you don’t want to dump into the next session whole — only the relevant subset, on the right client, in the right place. The hook doesn’t reason about importance; it just sorts and files. The next session loads the navigation file, and the navigation file knows the new decisions are there.
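The "sorts and files" step might look something like the sketch below. It assumes the items have already been extracted from the session into dicts with `client`, `kind`, and `text` keys; how the hook is triggered and how extraction works are left out. The destination file names are made up for illustration.

```python
from pathlib import Path

# Hypothetical mapping from item kind to a per-client destination file.
DESTINATIONS = {
    "decision": "decisions.md",
    "open": "open-items.md",
    "resolved": "resolved.md",
}


def file_items(items: list[dict], root: Path, session_date: str) -> dict[str, int]:
    """Append each extracted item to the right file for the right client.

    Items with an unknown kind are skipped, not guessed at.
    Returns how many lines were appended per destination, keyed by the
    path relative to root.
    """
    counts: dict[str, int] = {}
    for item in items:
        filename = DESTINATIONS.get(item["kind"])
        if filename is None:
            continue
        target = root / item["client"] / filename
        target.parent.mkdir(parents=True, exist_ok=True)
        # Append only, so earlier entries are never rewritten by the hook.
        with target.open("a", encoding="utf-8") as fh:
            fh.write(f"- {session_date}: {item['text']}\n")
        key = target.relative_to(root).as_posix()
        counts[key] = counts.get(key, 0) + 1
    return counts
```

Appending dated one-liners keeps the hook dumb on purpose: pruning and promotion into the wiki happen later, by hand.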

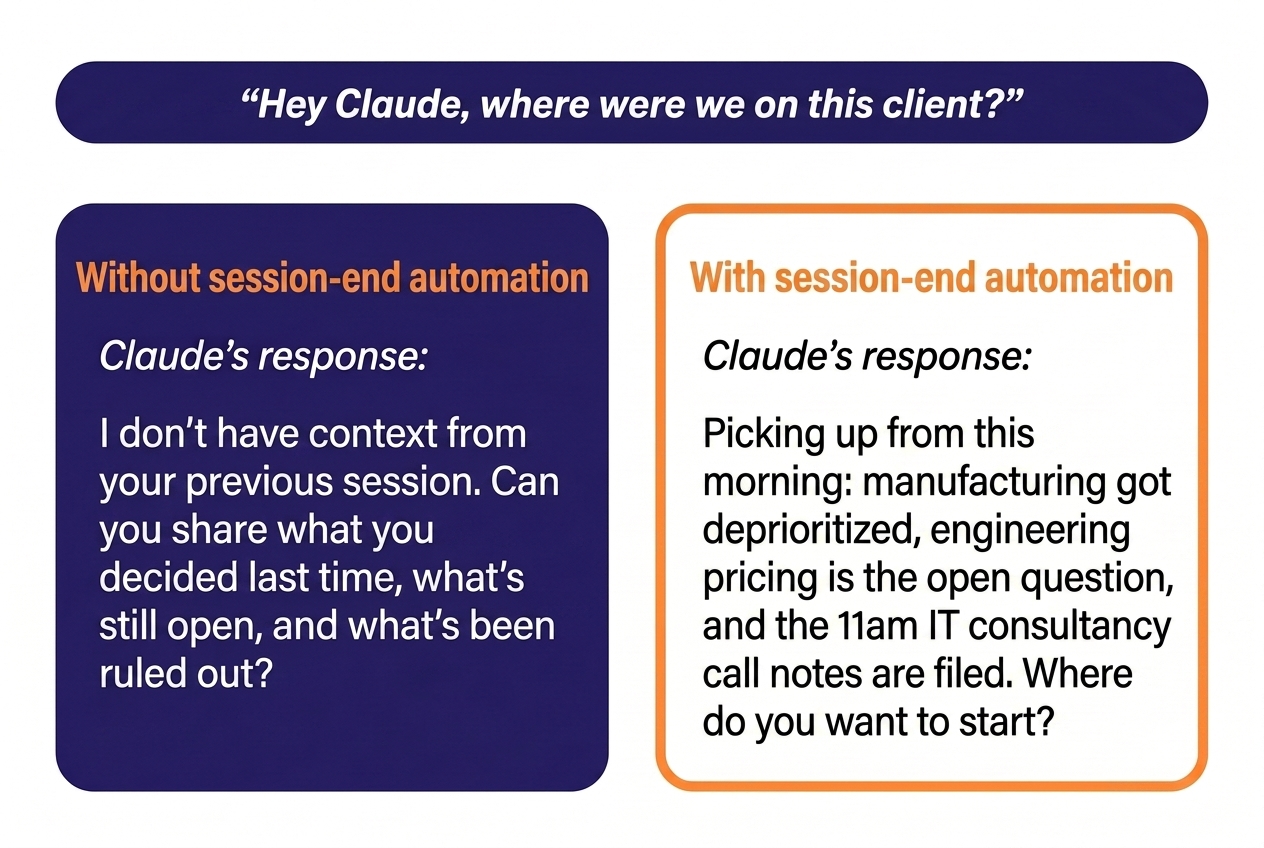

Without this layer. Start session two on the same client with “where were we?” and either re-explain what you decided three hours ago or risk Claude proposing the option you already ruled out. Multiply that across four clients and a few hundred sessions a quarter, and the cost of re-explanation is most of why “AI saves you time” turns out to be aspirational for a lot of people.

With this layer. Start session two and Claude already knows that the manufacturing segment got deprioritized this morning, that the open question on pricing for the engineering vertical is sitting in the queue, and that the call notes from the 11am with the IT consultancy prospect are filed. The next thing you say is the next thing you actually need to say, not the recap.

How I keep it current.

- The hook runs automatically at session-end. No daily maintenance.

- Destination files get pruned periodically: old decisions roll into the wiki, resolved questions archive, stale open items get either acted on or dropped.

- The extraction prompt has tightened over time as I’ve noticed which kinds of items it was missing or misfiling.

This is the boring layer. I added it last, after I got frustrated enough with losing context across sessions to bother. Nothing fancy. Runs locally.

3. SQLite for the genuinely tabular stuff

I have hundreds of sales call transcripts across clients, with extracted metadata — segment, deal stage, recurring objections, messaging that landed, anti-ICP signals. That’s rows and columns. It’s the closest thing in my setup to a “lots of documents” problem, and it’s where most people reach for a vector database next.

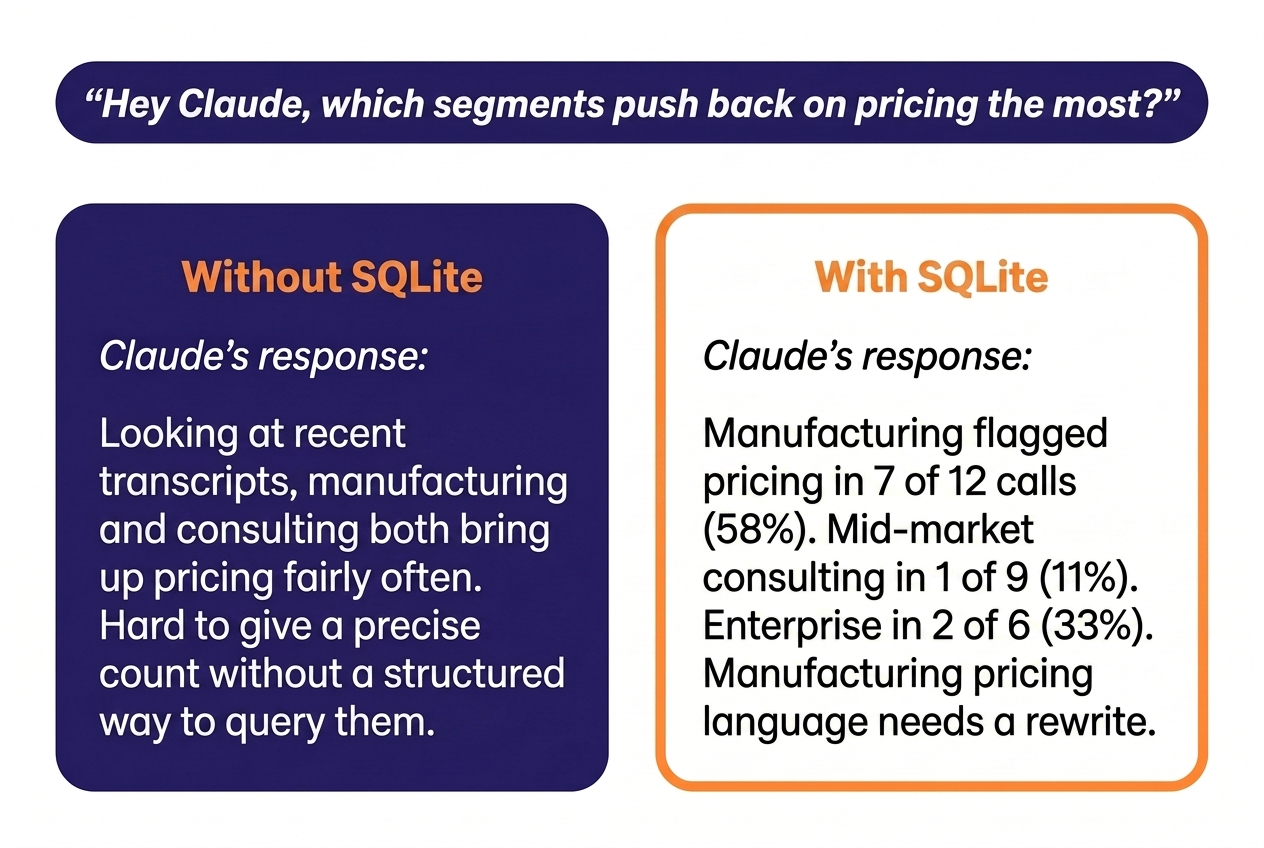

It’s also where a vector database would still be the wrong shape. The questions I actually ask are tabular: “Which objections come up most often with manufacturing prospects?” “How many calls in the last quarter mentioned the new regulation?” “Which segments respond best to the entity-linking framing?” Those are SQL questions. Plain SELECT … WHERE … GROUP BY answers them precisely. Semantic search would return the calls that feel most similar, which is not the same thing — and it would lose the precision a tag-based or filter-based query gives me.
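A minimal, runnable version of the first question ("which objections come up most often with manufacturing prospects?") is sketched below using Python's built-in `sqlite3` module. The two-table schema and the rows are made up for the example; the real schema isn't described beyond its metadata fields.

```python
import sqlite3

conn = sqlite3.connect(":memory:")
conn.executescript(
    """
    CREATE TABLE calls (
        id INTEGER PRIMARY KEY,
        segment TEXT NOT NULL,
        call_date TEXT NOT NULL  -- ISO 8601, so string order is date order
    );
    CREATE TABLE objections (
        call_id INTEGER NOT NULL REFERENCES calls(id),
        objection TEXT NOT NULL
    );
    """
)
# Made-up rows, only here to make the query runnable.
conn.executemany(
    "INSERT INTO calls (id, segment, call_date) VALUES (?, ?, ?)",
    [
        (1, "manufacturing", "2025-01-10"),
        (2, "manufacturing", "2025-02-03"),
        (3, "consulting", "2025-02-11"),
    ],
)
conn.executemany(
    "INSERT INTO objections (call_id, objection) VALUES (?, ?)",
    [(1, "pricing"), (1, "integration"), (2, "pricing"), (3, "timing")],
)


def top_objections(conn: sqlite3.Connection, segment: str) -> list[tuple[str, int]]:
    """Count distinct calls per objection for one segment, most frequent first."""
    return conn.execute(
        """
        SELECT o.objection, COUNT(DISTINCT o.call_id) AS n_calls
        FROM objections AS o
        JOIN calls AS c ON c.id = o.call_id
        WHERE c.segment = ?
        GROUP BY o.objection
        ORDER BY n_calls DESC, o.objection
        """,
        (segment,),
    ).fetchall()


print(top_objections(conn, "manufacturing"))
# [('pricing', 2), ('integration', 1)]
```

The answer is a count over tagged rows, so it is exact for whatever was tagged. Its accuracy depends on the tagging, not on how similar two transcripts sound.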

Without this layer. Ask Claude “which segments push back on pricing the most?” with raw transcripts only, and you get a vibes answer based on which transcripts happened to land in context. Ask the same question with a vector DB over the transcripts, and you get the calls that sound like pricing objections — which is not the same set as the calls where pricing was actually flagged.

With this layer. Same question, precise answer: “Manufacturing flagged pricing in 7 of 12 calls last quarter; mid-market consulting flagged it in 1 of 9. Manufacturing pricing language needs a rewrite, mid-market doesn’t.” Same model, same data, but the data is in the shape that fits the question.

How I keep it current.

- New rows: an extraction routine pulls metadata fields from each new transcript — segment, deal stage, objections raised, messaging that landed, anti-ICP signals — and inserts them.

- New fields: the schema gets a new field when codification surfaces a new attribute worth tagging — usually when I notice the same pattern across enough calls to be a real signal.

- Why SQLite: queryable from Claude directly, lives next to the markdown layer, never needs a server.
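The two maintenance moves above, inserting a row per transcript and adding a field when a new attribute is worth tagging, can be sketched with `sqlite3` as follows. The single-table layout, the JSON encoding of list values, and the function names are assumptions for the example.

```python
import json
import sqlite3


def open_db(path: str = ":memory:") -> sqlite3.Connection:
    """Open the database and make sure the (hypothetical) calls table exists."""
    conn = sqlite3.connect(path)
    conn.execute(
        """
        CREATE TABLE IF NOT EXISTS calls (
            transcript TEXT PRIMARY KEY,
            segment TEXT,
            deal_stage TEXT,
            objections TEXT  -- JSON array of strings
        )
        """
    )
    return conn


def column_names(conn: sqlite3.Connection) -> list[str]:
    # PRAGMA table_info returns one row per column; index 1 is the name.
    return [row[1] for row in conn.execute("PRAGMA table_info(calls)")]


def add_field(conn: sqlite3.Connection, name: str) -> None:
    """Add a TEXT column for a newly tagged attribute; no-op if it exists."""
    if not name.isidentifier():
        raise ValueError(f"not a safe column name: {name!r}")
    if name not in column_names(conn):
        conn.execute(f'ALTER TABLE calls ADD COLUMN "{name}" TEXT')


def insert_call(conn: sqlite3.Connection, transcript: str, meta: dict) -> None:
    """Insert or replace one transcript's metadata.

    Keys that are not columns yet are ignored; list values are stored as JSON.
    """
    known = column_names(conn)
    row = {"transcript": transcript}
    for key, value in meta.items():
        if key in known and key != "transcript":
            row[key] = json.dumps(value) if isinstance(value, list) else value
    columns = ", ".join(f'"{c}"' for c in row)
    placeholders = ", ".join("?" for _ in row)
    conn.execute(
        f"INSERT OR REPLACE INTO calls ({columns}) VALUES ({placeholders})",
        list(row.values()),
    )
```

Rows inserted before a field existed simply hold NULL for it, which is an honest record: those calls were never tagged for that attribute.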

The shape rule applies even here, in the part that looks the most like a corpus. Vector DB is right for “find me the thing that sounds like this.” It’s not right for “count and filter thousands of structured things.”

How to choose a memory architecture for Claude

The three layers each solve a different shape, and they’re chosen on that basis. Curated markdown for prose-shaped, judgment-heavy context. A small automation script for the bridge between sessions. SQLite for tabular, query-shaped data. Each layer is the simplest tool that fits its shape — and no layer is a vector database, because none of the shapes call for one.

That’s not because vector databases are wrong. It’s because they’re right for a different shape — and so are several other architectures I’m not running.

The eight common shapes, with examples and where each one breaks:

| Memory shape | Right when | Wrong when | Examples |
|---|---|---|---|
| No persistent memory (in-context only) | Each session is independent; the relevant context fits comfortably in the prompt; decisions don’t need to compound across sessions | You’re re-explaining the same context every session; output gets worse for it; the time cost of re-explanation outweighs the cost of building a memory layer | Default Claude or ChatGPT without memory features turned on |
| Built-in product memory | You want low-effort cross-session continuity for personal or casual use; you’re comfortable with the product choosing what to remember and how to surface it | You need to inspect, edit, version, or restructure what’s remembered; the product’s view of “important” doesn’t match yours; the trail needs to be auditable for professional work | Claude.ai’s auto-memory · ChatGPT memory |
| Hand-curated markdown context | Decisions build on previous decisions; the same fact carries different weight in different contexts; source attribution and trust hierarchy matter; you can hand-prune what goes in | The corpus is too large to curate by hand; freshness changes faster than you can update | Per-client business-profile.md and similar context files, plus a deeper queryable wiki per client (my layer 1) |
| Session-end automation | Decisions, open items, and resolved questions need to carry forward between sessions on the same topic | The decision of what to file requires judgment the automation can’t make | A selective local hook (my layer 2); Cole Medin’s compiler at the more aggressive end |
| Tabular / SQL | Count, filter, group across structured rows; the questions are precise and known in advance | The questions are about meaning, not match patterns; the data is unstructured prose | SQLite or DuckDB over call transcripts with extracted metadata (my layer 3) |
| Agent-curated markdown wiki | The knowledge base has outgrown what one person can curate, but the content is still prose-shaped (Karpathy reports this works at roughly 100 articles and 400K words via auto-maintained index files), and you’re willing to let the LLM do the curation | You need source attribution and trust hierarchy to be human-decided; the locus of curation shifts to the LLM here, and that is the trade-off you’re making | Andrej Karpathy’s personal LLM-maintained wiki |
| Knowledge graph with typed relationships | The relationships between facts are stable and worth encoding as typed edges (causes, fixes, contradicts, supersedes); the work is multi-hop relational reasoning over a structured fact-graph | The relationships are still in flux as data accumulates; the work is mostly prose retrieval and judgment rather than reasoning over edges; the formality of a schema is more overhead than benefit | Mem0 · Zep / Graphiti · Cognee · Neo4j-backed agent memory |
| Vector DB / RAG | Find semantically similar content in a large unstructured corpus you can’t pre-curate; retrieval is the work | Decisions need source attribution; structured queries exist; the corpus is small enough for any other shape | Customer support over thousands of help-center articles; cross-codebase semantic code search |

Two rows deserve a closer look — the trade-offs are sharper than the table can carry.

Session-end automation is a spectrum, not a single point. The version I run is selective — the hook files specific decisions and open items I want to carry forward. Cole Medin has built the more aggressive cousin: a compiler that captures every Claude Code session via hooks and lets the LLM decide what’s worth promoting into a wiki.

Different point on the precision-versus-coverage trade-off. His approach is right when the dark matter — the dead-ends, the sub-threshold decisions, the things human curation drops — is worth the noise it adds. For per-codebase memory where understanding why a dead-end was a dead-end matters six months later, that trade-off is often correct. For client GTM context where source attribution decides whether a fact is a conclusion or just an input to investigate, it isn’t.

The agent-curated wiki row carries a similar trade-off, one layer up. Andrej Karpathy is explicit: in his setup, the LLM writes and maintains the wiki, and he rarely touches it directly. The LLM also runs periodic lint cycles — finding inconsistencies between articles, imputing missing data, suggesting new article candidates as the corpus grows.

That’s the right call for a personal research library where breadth beats depth. It’s the wrong call for the work I do, where what I choose to leave out of a client’s context is the part that makes the LLM useful at all. Same architecture, opposite curation locus. The shape of the work picks which side you want to be on.

If my work shifted — if I started writing across thousands of long-form documents I couldn’t curate, or doing semantic discovery across a corpus too large to navigate by pointers, or reasoning over a stable graph of typed relationships — the shape would change, and the tool would change with it. That’s the right reason to add a vector layer, hand curation over to the LLM, or stand up a graph DB. Not because any of these is the default sound of the word “memory.”

The same memory mismatch shows up in GTM stacks

This isn’t a GenAI problem. It’s a match-the-tool-to-the-actual-work problem, and B2B SaaS GTM stacks are full of the same mismatch.

Companies with 40 customers buying automation platforms built for 40,000. Multi-touch attribution models running over a pipeline you can count on your fingers. Outbound sequencing tools licensed before there’s a validated ICP to sequence against. Dashboarding stacks priced for a reporting team of six, run by a marketing manager who opens them once a week.

The tool isn’t the problem. The mismatch between the tool and the actual work is. The same instinct that makes a non-engineer reach for “vector DB” because it sounds right also makes a five-person GTM team license Clay + Apollo + Outreach + 6sense + Gong before they’ve nailed who they’re selling to. Buying the fancy thing feels like progress. It’s how almost every over-built GTM stack gets its first three layers.

The fix is the same in both places: start by naming the shape of the work, then pick the simplest tool that fits that shape. Add complexity when the simple thing breaks — not before.

Where to start with Claude persistent memory

None of what I run today is the end state. Persistent memory will need more parts as the work scales — more clients, more session volume, more places where the simple thing starts to break. I’ll add what’s needed when it’s needed. Probably some of those additions will be embedding-based; the question is whether they’re matched to a shape that actually requires them.

The starting point should be the simplest thing that fits the shape of the work. The fancy version is almost never the right starting point. You add complexity when the simple thing breaks.

One last honest note: none of this runs itself. No memory system, no matter how well architected, can replace the human judgment that decides what to codify, what to leave out, and when something’s gone stale. The architecture does the heavy lifting — once a fact is curated, it stays put; once a decision is filed, it carries forward — but I still have to remember to use it, and to run periodic health checks on the system, the content, and the memory itself. The system isn’t a substitute for discipline. It’s a multiplier on it.

I don’t use AI to replace thinking. I use it to never think the same thing twice. That’s only possible if the memory layer underneath is shaped for the kind of thinking I actually do, and if I keep showing up to maintain it.

Persistent memory is one layer of the GTM context OS that lets a fractional GTM lead run multiple B2B SaaS engines from inside Claude Code. For how the whole thing fits together, see also the AI workflows that ride on top of it.

Anna Ursin

Fractional GTM lead for B2B SaaS companies under €5M ARR. I help founders build go-to-market engines that actually connect to pipeline, instead of random acts of marketing.